Block Cut 4,000 Jobs and Blamed AI. The Truth is More Complicated.

Block just showed every CEO in America the AI layoff playbook.

A week ago, 4,000 people at Block learned they no longer have jobs.

They worked at a profitable company. A growing company. Gross profit up 24% year over year. Cash App gross profit up 33%. Block (the company that operates Square, Cash App, Afterpay, and Tidal) wasn’t struggling; it was thriving.

Block CEO Jack Dorsey tweeted his reasoning: AI.

Narrative one: AI made me do it

AI is replacing workers; the future is here, brace yourself. He used more words than that, but that is essentially what Jack said.

AI, paired with flatter, smaller teams, will enable a fundamentally different company architecture.

This is both true and not at all the whole story.

Regardless of what was true, Block’s stock surged over 20% on the news. The market saw 4,000 fewer salaries against the same revenue guidance and did the math. Investors saw a CEO truly committing to AI and liked the new narrative.

For those 4,000 people, the math looks very different. They’re updating LinkedIn profiles, texting former colleagues, running the numbers on severance and mortgage payments to see if they’ll be alright on their 20 weeks’ pay plus one week per year of tenure. As anybody looking for a job will tell you, the job market sucks right now.

While everybody was running the numbers, a counter-narrative to Jack’s ‘I had to do this because of AI’ rapidly emerged.

Narrative two: Jack over-hired

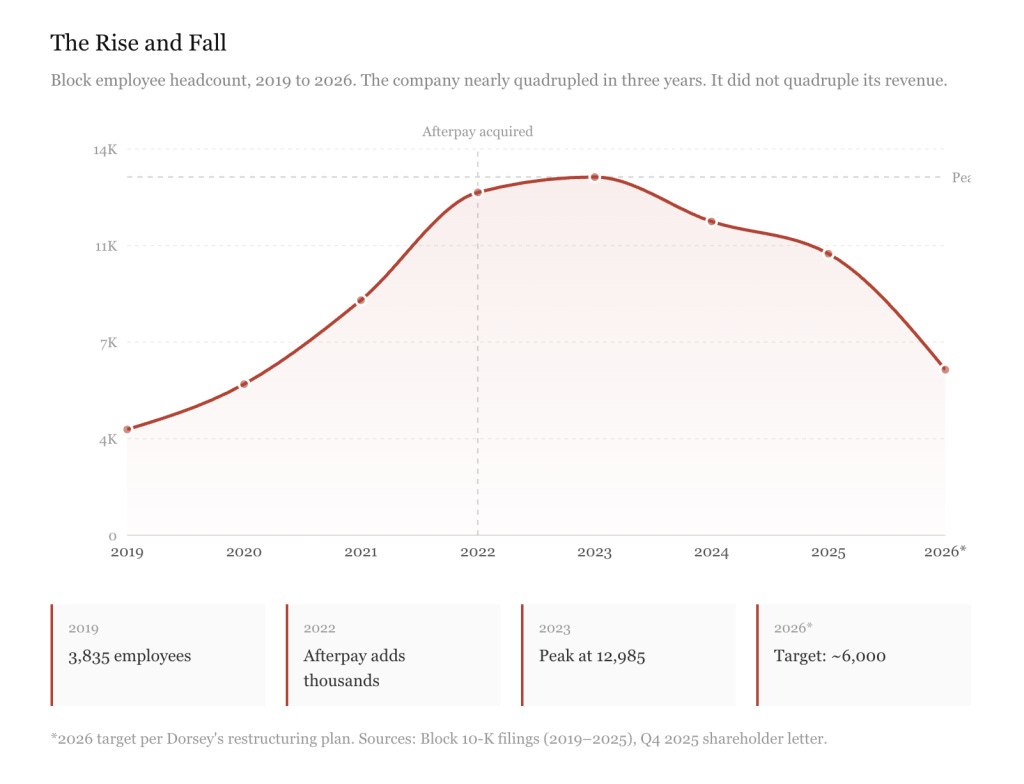

In December 2019, Block had 3,835 employees. By December 2022, that number was 12,428. The company more than tripled in three years. Some of that growth came through the $29 billion Afterpay acquisition in 2022, but organic hiring was massive too. Headcount jumped 46% in 2022 alone. Block peaked at 12,985 employees in 2023.

This was the ZIRP-era playbook in full swing: cheap capital, aggressive expansion, hire ahead of the growth curve. Block was hardly alone. Every major tech company did some version of this.

What makes Block’s version stand out is the gap between that hiring and the products it produced. Jack Dorsey is an extraordinary product creator. Twitter, Square’s point-of-sale system, Cash App: three undeniable hits. Few founders can claim even one.

But his track record as a business operator tells a different story.

Twitter is the clearest example. Jack co-founded the company, was pushed out as CEO in 2008, returned to the role in 2015, then spent years splitting his time between Twitter and Square while the platform stagnated. Twitter never evolved its core product meaningfully, monetized primarily through low-margin ads, and became one of the most notoriously bloated companies in Silicon Valley.

Jack eventually stepped down as CEO in 2021. When Elon Musk acquired the company in 2022 for $44 billion, burdened it with debt, renamed it X, and cut roughly 80% of the staff, the platform kept functioning (mostly).

CEOs around the world absorbed two lessons:

You can cut your staff far deeper than conventional wisdom and keep going

Jack vastly over-hired

Then there’s Square. Square had a dominant low-end point-of-sale system but failed to move upmarket, largely because of a strategic decision to avoid field sales reps. That opened the door for Toast and Clover to seal off the mid-market opportunity. Square only started hiring field sales within the past year, which is malpractice. Online commerce went to Shopify.

Launched in 2013, Cash App pioneered peer-to-peer payments, then watched Venmo and Zelle take mindshare (please Zelle me vs Venmo, it’s so much faster), Robinhood and Coinbase take trading volume, and Affirm and Klarna take buy-now-pay-later. The Afterpay acquisition was orthogonal at best, came at a massive price, took years to integrate, and still isn’t fully there.

Oh, and he bought Tidal for ~$300 million for some reason?

Tidal was acquired to put Jay-Z on the board. The company continues to invest in proprietary Bitcoin hardware, and something called ‘neighborhoods’ by Cash App—which I frankly didn’t even know existed until I saw complaints about their head of GTM building a startup for the last 8 months instead of doing his job.

Clearly, Block had overhired. But if the output wasn’t there at 10,000 people, then AI tools aren’t “replacing” productive capacity that existed. They’re providing a forward-looking narrative for a company that couldn’t translate headcount into product velocity.

Back in January 2024, Block laid off roughly 1,000 people. In March 2025, another 931 people were let go, 748 open roles were frozen, 193 managers were demoted to individual contributors, and 80 management roles were eliminated entirely. Jack sent an all-lowercase email (read it in full here) to explain:

“none of the above points are trying to hit a specific financial target, replacing folks with AI, or changing our headcount cap. they are specific to our needs around strategy, raising the bar and acting faster on performance, and flattening our org so we can move faster and with less abstraction.”

That was eleven months ago.

But AI has accelerated since then. If you tried AI coding two years back and weren’t impressed, I challenge you to try Claude Code or Codex today. People are building effective AI coworkers. I just spent the weekend having parallel agents in Claude Code wipe out my administrative backlog and automate my work reporting.

So it wasn’t a huge surprise when, in early February of this year, Bloomberg reported Block was planning to cut up to 10% of its remaining workforce as part of an “efficiency push.” This time, the cuts came paired with something new: a company-wide mandate requiring every employee to use generative AI tools daily, with AI fluency built into performance reviews. Employees were also required to send Jack weekly emails detailing their five top accomplishments, echoing the inane Elon Musk-era DOGE requirement for federal workers. Jack reportedly uses generative AI to summarize the thousands of responses.

The internal reaction was severe.

“Morale is probably the worst I’ve felt in four years,” one employee complained at a recent staff meeting, according to a Wired report. “The overarching culture at Block is crumbling.”

On the AI mandates specifically, an employee told Wired: “Top-down mandates to use large language models are crazy. If the tool were good, we’d all just use it.”

At a company-wide meeting, Jack acknowledged receiving thousands of messages about “widespread concerns about layoffs,” “performance anxiety,” and “the tension between accelerating delivery through AI adoption versus maintaining code quality and engineering rigor.” His response: a sizable portion of the departing employees had been “phoning it in.”

Three weeks later, 4,000 more team members are gone. And the framing has shifted entirely. AI is now the explicit reason.

March 2025: “We are not replacing people with AI.”

Early February 2026: “Everyone must use AI daily. It’s in your performance review.”

February 26, 2026: “AI tools have fundamentally changed what it means to run a company,” and 4,000 people are out.

AI washing

Since the 40% layoff announcement, a term has crystallized around what Block did: “AI washing.” Oxford Economics published a report in January 2026, finding that many layoffs CEOs were blaming on AI were actually corrections for overhiring. Their director of global macro research, Ben May, put it directly:

“We suspect some firms are trying to dress up layoffs as a good news story rather than a bad one, by pointing to technological change instead of past overhiring.”

Bloomberg ran a piece titled “Jack Dorsey’s 4,000 Job Cuts at Block Arouse Suspicions of AI-Washing.” And perhaps most tellingly, there’s this quote:

“There’s some AI washing where people are blaming AI for layoffs that they would otherwise do.”

- Sam Altman, CEO & Co-Founder, OpenAI

Challenger, Gray & Christmas tracked AI-cited layoff plans in 2025: roughly 55,000, or about 4.5% of the 1.2 million total. Then January 2026 saw 108,435 US job cuts, the highest monthly total since the 2009 financial crisis, and only 7,600 of them (7%) cited AI.

The skepticism became more pointed as details about the specific cuts emerged.

Block's former head of communications, writing in the New York Times, noted that the layoffs included shrinking the policy team and eliminating diversity and inclusion roles. His assessment: the reorganization "reads like standard prioritization and cost management, not an AI-driven reinvention."

Investor Marcelo Lima put it bluntly:

“Jack Dorsey is using AI as political cover to slash headcount but the reality is Block was incredibly bloated and mismanaged for years. Jack knew it, Amrita knew it, everyone knew it. They did nothing.”

Or to put it more succinctly: “This isn’t an AI story. It’s organizational bloat wearing an AI costume.”

Block’s leaders have not been happy about this, with Jack and others publicly clapping back on the social media platform he built.

But it’s hard to overcome a mismanagement narrative when you cut 4,000 employees five months after throwing a $68 million company party with Jay-Z, Soulja Boy, and T-Pain performing. It’s not a good frame.

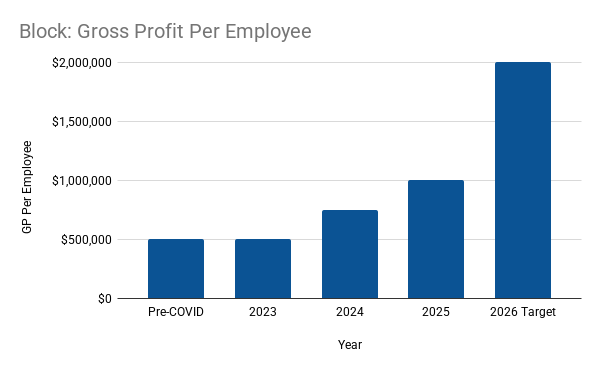

Putting the merits of the party aside (T-Pain is great live, for the record), the efficiency data does complicate the narrative.

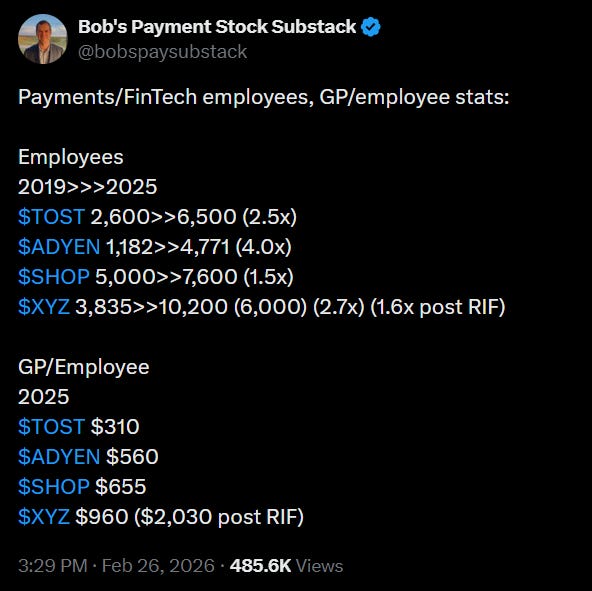

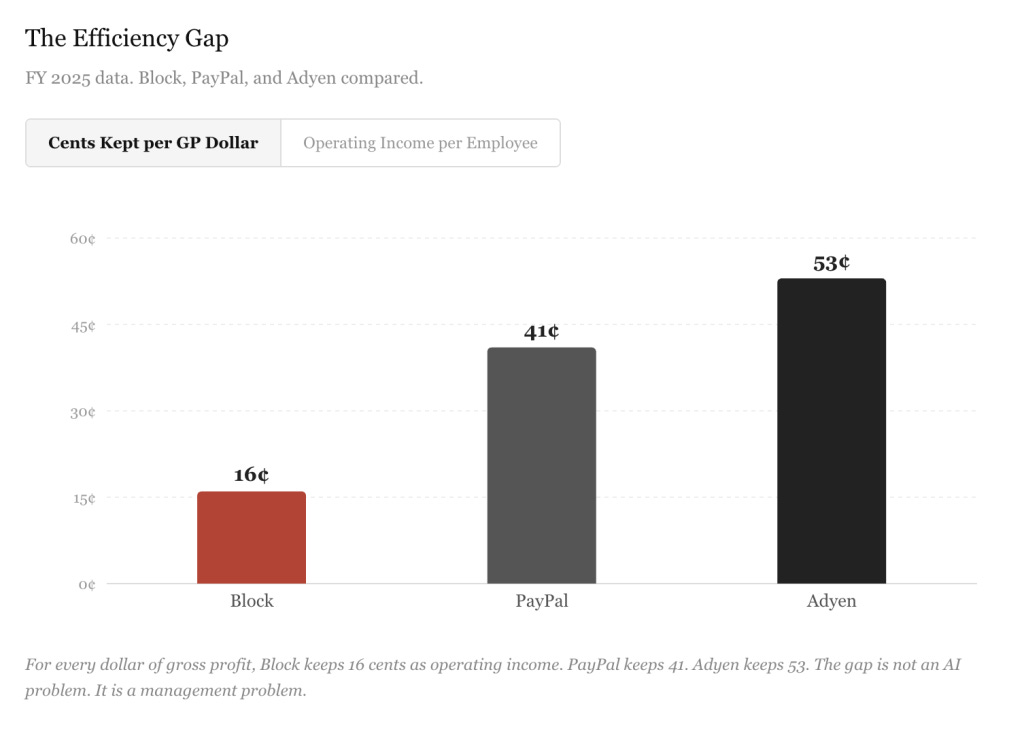

But gross profit per employee only tells half the story. A different set of efficiency metrics, comparing how much of that gross profit Block actually keeps as operating income, paints a less flattering picture. For every dollar of gross profit, Block keeps 16 cents. PayPal keeps 41. Adyen keeps 53.

So was Block bloated? Compared to its own product velocity, arguably yes. The products stagnated while the headcount tripled. Compared to its fintech peers on a gross-profit-per-head basis, it was already the most efficient company in the group. Both of these things are in the data. The story you tell depends on which metric you lead with.

But to put it all in perspective: Block at under 6,000 employees is just a bit larger than in December 2020, when it had 5,477 people. Jack isn’t cutting to some radical AI-native future headcount. He’s cutting back to roughly where the company was in 2020, before most of the hiring spree.

So was the layoff AI-washing or AI-driven?

Personally, I think it’s both, and the ratio depends on which department you were in.

What’s happening inside Block

A few weeks ago, I interviewed Angie Jones, Block’s VP of Engineering for AI Tools & Enablement, for my podcast Chain of Thought. She walked me through one of the most ambitious AI deployments in the world: rolling out AI agents to all (then) 12,000 Block employees in eight weeks. She showed me live demos of Goose, Block’s extremely cool open-source AI agent.

A developer mentions a bug in Slack; Goose identifies the code, suggests three fixes, and opens a PR: five minutes, no IDE.

The throughline of that conversation was clear: AI tools paired with smaller, flatter teams are indeed changing what it means to build software and run a company. That is almost word for word the language Jack used to describe the decision to cut more than 1 out of every 3 Block team members.

Agents for everyone

The deployment started with an ML engineer named Bradley who was frustrated that building internal AI tools was too slow. Early attempts failed because the team built for engineers, and the broader workforce couldn’t even get past the installation and authentication steps. People got stuck on auth flows, couldn’t configure their environments, gave up before ever using the tool. The AI was fine. Onboarding was a bottleneck.

The breakthrough came when Angie’s team made a critical decision: instead of picking one AI model and forcing it on everyone, they let employees choose. Marketing folks gravitated toward ChatGPT. Engineers preferred Claude. Some teams used local models. Their agent framework, Goose, is fully open source and model-agnostic. It doesn’t care which model you use and has no tokens to sell. That flexibility drove adoption and within eight weeks, all 12,000 employees had access.

The governance side is where Angie’s account diverges most sharply from the typical “AI deployment” press release. People’s money runs through Block’s systems. You can’t just hand every employee an AI agent with access to production databases and hope for the best.

Her team built data classification systems that define what Goose can and can’t see.

They implemented MCP tool annotations that distinguish between read-only operations (safe to run autonomously) and destructive operations (require human approval).

Authentication runs through OAuth with tokens stored in system keychains, a solution Angie herself described as “practical but temporary” while longer-term approaches are developed.

They red-teamed the deployment and found surprises.

This wasn’t a company that just threw AI at the wall. There was engineering rigor behind it, the kind of rigor you’d expect from a fintech company and the kind that’s usually absent from the “we deployed AI to everyone” announcements.
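To make the read-only versus destructive distinction concrete, here is a minimal sketch of an approval gate. It is not Goose's code. The `ToolAnnotations` class, the `run_tool` helper, and the tool names are hypothetical; the only thing borrowed from MCP is the idea of a read-only hint on a tool (the spec calls it `readOnlyHint`). The policy assumed here is the conservative one: anything not explicitly marked read-only waits for a human.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ToolAnnotations:
    """Hypothetical stand-in for a tool's MCP annotations."""
    read_only_hint: bool = False  # mirrors MCP's readOnlyHint; unannotated tools are assumed risky


def requires_human_approval(annotations: ToolAnnotations) -> bool:
    """Only tools explicitly marked read-only may run autonomously."""
    return not annotations.read_only_hint


def run_tool(name: str, annotations: ToolAnnotations,
             call: Callable[[], str], approve: Callable[[str], bool]) -> str:
    """Run `call`, asking `approve` first when the tool is not read-only."""
    if requires_human_approval(annotations) and not approve(name):
        return f"{name}: blocked, approval denied"
    return call()


# Illustrative tools; the names are made up for this example.
lookup = ToolAnnotations(read_only_hint=True)
delete = ToolAnnotations()  # no hint given, so it is treated as destructive


def deny_all(tool_name: str) -> bool:
    """Stand-in for a human reviewer who rejects every request."""
    return False


auto_result = run_tool("lookup_ticket", lookup, lambda: "ticket found", deny_all)
gated_result = run_tool("delete_record", delete, lambda: "record deleted", deny_all)
print(auto_result)
print(gated_result)
```

The read-only lookup runs even though the reviewer denies everything, because it never asks. The delete call is stopped at the gate. Defaulting unannotated tools to "needs approval" is my design choice for the sketch, not something Angie described.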

Angie also shared one example that I think shows how AI adoption actually works when it’s voluntary rather than mandated. An employee who was not a developer needed to solve a specific workflow problem. She used Goose to build her own MCP server, a tool that connects AI agents to external systems and data sources. When she finished, she asked Angie’s team where to submit it. They told her GitHub. Her response: “Oh, I don’t have a GitHub account. I’m not a developer.”

She built a functioning piece of software infrastructure without identifying as technical. This is not an uncommon story.

Software for everyone

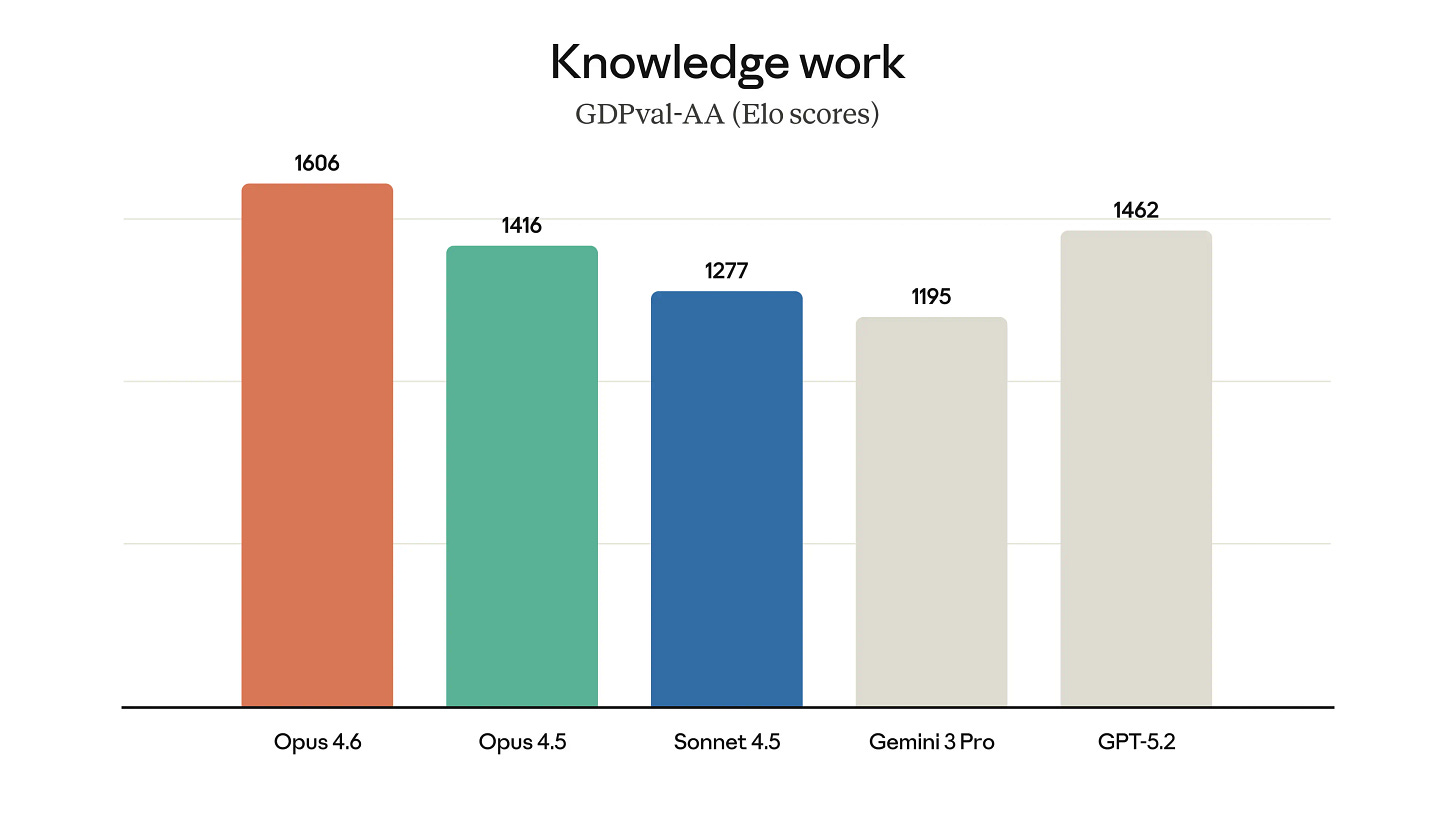

Thirteen thousand people applied to Anthropic’s recent Opus 4.6 hackathon. Five hundred were accepted.

The winners?

A California attorney who built a permit-processing app in six days.

A cardiologist from Brussels who built a patient follow-up tool without knowing how to code.

A road technician from Uganda who built a system for infrastructure assessment.

These were people who understood problems deeply, and they outperformed peers who understood the technology deeply, as Autocomplete AI wrote.

This weekend, when I needed a tightly scoped Substack MCP server for a project, all I did was ask Opus 4.6, and go back to what I was working on. A few minutes later, it had built me the MCP I needed.

The phrase ‘paradigm shift’ has been overused in recent years, and is overused by AI models in writing, but I am hard-pressed to find other words that describe what is happening in coding today without sounding hyperbolic.

Anybody can build anything with software today, in natural language. The barrier to creation in software has never been this thin. AI coding has advanced by leaps and bounds. If you told me in 2020 what I’d be able to do with AI coding today, I’d think this was AGI. Coding is basically solved.

We have a set of amazing problem-solving tools at our fingertips. Deploying them properly and using them accurately and effectively? Yes, that takes effort, infrastructure, and consideration. But they are increasingly, impressively capable.

In an interview with Wired’s Steven Levy published after the layoff, Jack was more specific about the trigger than in any of his public posts. He pointed to a shift in December, naming Anthropic’s Opus 4.6 and OpenAI’s Codex 5.3 specifically. The models, he said, had gone from handling greenfield projects well to being effective on larger, established code bases. He described this as presenting “an option to dramatically change how any company is structured.”

When Levy asked directly whether this was AI washing, Jack pushed back and suggested that organizational bloat is “a function of inheriting a management structure and a hierarchy all the way back from the 1900s.” Levy’s response was immediate: “You built Block from scratch, Jack. It’s your company.” Jack’s answer: “Absolutely.”

Defending the decision

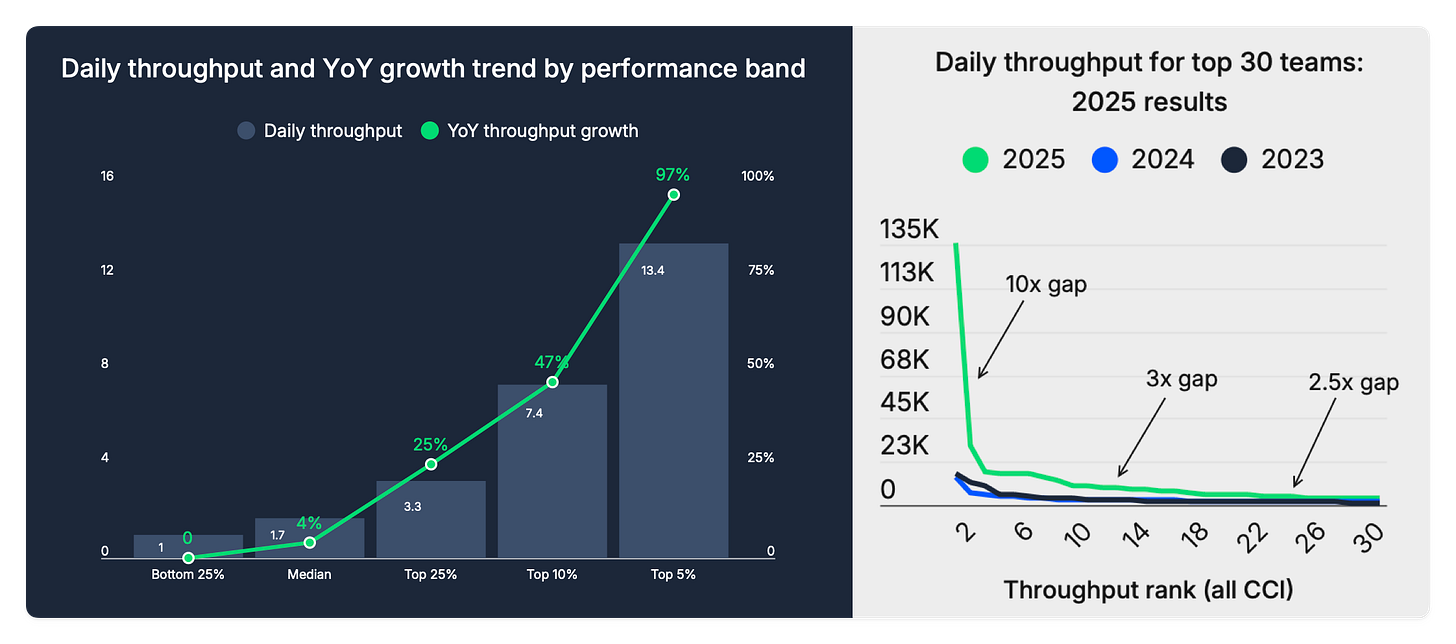

Since the layoff announcement, one of the few insiders to speak publicly with specific numbers is Cam Worboys, head of product design at Cash App. His account complicates the “AI washing” narrative in ways that are hard to dismiss.

In August 2025, Cash App’s design team shipped 36 PRs. By the last week of February 2026, they’d crossed 1,000 in production. Weekly averages climbed from 21 in December to 54 in January to 124 in February. That’s a 6x increase in output over three months.

Throughput is not everything, but it is certainly something.

Worboys also described the role transformation happening in practice. Product managers are writing copy. Data scientists are shipping features. Designers are functioning as directly responsible individuals more than pixel-pushers. He estimated that two years ago, a business of Cash App’s scale would need around 125 product designers. Today he thinks the number is closer to 25. “The tools aren’t perfect,” he wrote, “but individual leverage has 10x’d.”

His framework for understanding the shift: roughly 80% of work on most roadmaps involves improving things that already exist. Defined surfaces, clear scope, automatable. The other 20% requires invention, uncharted territory where craft and taste determine the outcome. AI handles the 80%. Humans focus on the 20%.

Whether you find this inspiring or alarming probably depends on which side of the layoff you’re on. For the designers and engineers who remain, it’s a vision of higher-leverage, more creative work. If you can get over the shock of losing your colleagues, perhaps there is a bright future ahead.

For the ones who were let go, it’s a senior leader at their former employer publicly arguing that a fraction of the old team can do the job. Both readings are valid. The production numbers, at least, are specific.

As Block’s leadership went on the defensive in the press, CFO Amrita Ahuja gave a detailed defense in an interview. She laid out the gross profit per employee trajectory:

roughly $500,000 pre-COVID

flat through 2023

climbing to about $750,000 in 2024

$1 million in 2025.

2026 goal: $2 million per employee

Ahuja also provided context that most coverage has missed. Goose, Block’s internal AI agent, has been in production for approximately 18 months, predating the layoff announcement by over a year. Since September, she said, developer productivity has increased with a 40% rise in per-engineer AI tool usage for pushing code and features to production. A risk underwriting model that previously took a full quarter to build was completed in a fraction of that time. Block simultaneously raised its 2026 outlook: gross profit expected to grow 18% year over year, with profits climbing 54%.

These are specific, testable claims from a public company CFO. They’ll either hold up over the next four quarters or they won’t.
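One way to sanity-check the per-employee trajectory is to back it out from figures cited in this piece: the $12.2 billion 2026 gross profit guidance and the 18% expected growth. The headcounts below are my rounded assumptions (about 10,000 before the cut and about 6,000 after), not reported figures, so treat the result as a rough check and nothing more.

```python
# Inputs taken from figures cited in this article.
GUIDANCE_2026_GROSS_PROFIT = 12.2e9  # Block's 2026 gross profit guidance, in dollars
GROWTH_2026 = 0.18                   # expected year-over-year gross profit growth

# Assumptions: rounded headcounts, not reported figures.
HEADCOUNT_BEFORE = 10_000
HEADCOUNT_AFTER = 6_000

# Back out the implied 2025 gross profit from the guidance and growth rate.
gross_profit_2025 = GUIDANCE_2026_GROSS_PROFIT / (1 + GROWTH_2026)

per_head_2025 = gross_profit_2025 / HEADCOUNT_BEFORE
per_head_2026 = GUIDANCE_2026_GROSS_PROFIT / HEADCOUNT_AFTER
ratio = per_head_2026 / per_head_2025

print(f"2025 gross profit per employee: ${per_head_2025 / 1e6:.2f}M")
print(f"2026 gross profit per employee: ${per_head_2026 / 1e6:.2f}M")
print(f"Implied multiple: {ratio:.2f}x")
```

On these rounded inputs, gross profit per employee comes out near $1.03 million for 2025 and $2.03 million for 2026, which lines up with the $1 million and $2 million figures Ahuja cited. Most of that doubling comes from the smaller denominator, not from faster growth.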

The question is what all of this evidence actually proves, and what it doesn’t.

Missing math

The productivity numbers are credible. I believe what Angie described. What I’m less certain about is the inferential leap from “employees save 50 to 75 percent of their time on common tasks” to “we need 40% fewer people.” Those are different claims.
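Here is a rough sketch of why those are different claims. The missing variable is how much of a person's week the "common tasks" actually occupy. That share is an assumption I am introducing, as is the simplification that freed time converts one-for-one into headcount at flat output.

```python
def overall_time_saved(task_share: float, task_saving: float) -> float:
    """Fraction of total work time freed when `task_share` of the week
    gets `task_saving` faster (both expressed as fractions between 0 and 1)."""
    return task_share * task_saving


def task_share_needed(target_cut: float, task_saving: float) -> float:
    """Share of the week the sped-up tasks must occupy for the savings
    alone to cover a headcount cut of `target_cut` at flat output."""
    return target_cut / task_saving


# Hypothetical case: common tasks fill half the week.
for saving in (0.50, 0.75):
    freed = overall_time_saved(task_share=0.5, task_saving=saving)
    print(f"{saving:.0%} saving on half the week frees {freed:.1%} of total time")

# What a 40% cut would require of the savings figures quoted above.
for saving in (0.50, 0.75):
    share = task_share_needed(target_cut=0.40, task_saving=saving)
    print(f"At {saving:.0%} task savings, common tasks must be {share:.0%} of the week")
```

Under these assumptions, a 50% saving only supports a 40% cut if the accelerated tasks make up 80% of everyone's time, and a 75% saving still needs them to be more than half. That may be true for some roles. It is hard to believe it is true across a whole company.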

Wharton professor Ethan Mollick, one of the most respected voices in AI research and someone who is broadly optimistic about the technology, put it plainly on LinkedIn:

“Given that effective AI tools are very new, and we have little sense of how to organize work around them, it is hard to imagine a firm-wide sudden 50%+ efficiency gain that justifies massive organizational cuts.”

He recommended taking the AI justification “with a grain of salt.” When someone who has spent years studying and advocating for AI in the workplace says the efficiency claims don’t add up, it’s worth paying attention.

Writer Om Malik sharpened the math further. Block’s 2026 guidance implies each remaining employee will need to produce 2.6 times what they did in 2025. That’s a 160% productivity jump in a single year, which seems challenging to say the least.

There will be vast organizational challenges from all the lost team members, and from trying to transition to a new way of working.

Where does the time go

When an employee saves 50% of their time on a task, where does the reclaimed time go? There are three possibilities, and each leads to a very different headcount outcome.

Possibility one: they do more. The same number of people ship more features, clear more tickets, and build more products. Headcount stays flat, output goes up. This is the productivity multiplier story, and it’s the one most engineering leaders would prefer to tell their teams. It’s also the one Angie’s examples in our conversation mostly support. The engineer who goes from bug-to-PR in five minutes doesn’t disappear. She handles more bugs, or shifts to higher-leverage work.

There is a tension in this option.

"If AI truly makes employees twice as effective, the ROI of each engineer increases, meaning you should want more engineers, not fewer. It requires us to accept that there's only a fixed amount of software engineering to be done, no new ideas, no unfinished business, nothing in the backlog." - AICE Labs Analyst

In other words, the "do more" path only fails as a business case if you believe there's nothing left to build. For a company like Block, which lost ground to competitors across nearly every product line, that's a hard case to make.

Possibility two: you need fewer. The same output gets maintained by fewer people. Headcount goes down, output stays flat. This is the story Wall Street is pricing into Block’s stock today. It’s the margin expansion trade. And it’s the story Jack is telling when he says “smaller and flatter teams” can “do more and do it better.”

Possibility three: the role changes. Some tasks within a role get automated. The role itself doesn’t disappear, but it transforms. Fewer people are doing the old version of the job, and more people are doing a new version that looks different. Junior roles compress. Senior roles expand. Management layers thin. This is the most likely outcome in practice and the hardest one to plan for.

It’s also something that many of us believe we are already seeing in hiring patterns.

At Block, it looks like Jack chose door number two for most of the company and door number three for whoever’s left. The 4,000 who are gone aren’t being “replaced by AI” in the literal sense. The remaining 6,000 are expected to produce the same output (or more) with AI tools filling the gaps. Whether that works depends entirely on whether the tools can actually absorb that load and whether the remaining employees have the organizational support to adapt.
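Doors one and two are the same productivity multiplier spent in opposite directions, and a few lines of arithmetic show what a 40% cut quietly assumes. This is a simplified sketch: the function names are mine, the 10,000 starting headcount is a rounded assumption, and door three is left out because a changing role does not reduce to one number.

```python
def do_more(headcount: float, multiplier: float) -> tuple[float, float]:
    """Door one: same people, more output. Returns (headcount, relative output)."""
    return headcount, multiplier


def need_fewer(headcount: float, multiplier: float) -> tuple[float, float]:
    """Door two: same output, fewer people. Returns (headcount, relative output)."""
    return headcount / multiplier, 1.0


def multiplier_for_cut(cut_fraction: float) -> float:
    """Per-person productivity multiple needed to hold output flat
    after cutting `cut_fraction` of the workforce."""
    return 1.0 / (1.0 - cut_fraction)


STARTING_HEADCOUNT = 10_000  # assumption: rounded pre-layoff headcount

m = multiplier_for_cut(0.40)
door_one = do_more(STARTING_HEADCOUNT, m)
door_two = need_fewer(STARTING_HEADCOUNT, m)

print(f"Multiplier implied by a 40% cut: {m:.2f}x")
print(f"Door one: {door_one[0]:,.0f} people, {door_one[1]:.2f}x output")
print(f"Door two: {door_two[0]:,.0f} people, {door_two[1]:.2f}x output")
```

Holding output flat after a 40% cut requires every remaining person to be about 1.67x as productive as before. The same multiplier applied through door one would have meant roughly two-thirds more output from the original team.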

There’s also a revealing tension in the reporting. Angie described enthusiastic adoption: employees voluntarily building tools, saving hours, and excited about what they could do. The anonymous employees quoted by Wired weeks before the cut told a very different story: “Top-down mandates to use large language models are crazy. If the tool were good, we’d all just use it.”

Both accounts are likely true. The people closest to Goose and Angie’s AI tools team were probably seeing meaningful wins. Other parts of the organization experienced the AI mandate as surveillance theater layered on top of rolling layoffs. The gap between those two experiences tells you something important about how AI deployment actually works inside large companies. The technology can be impressive, and the rollout can still be a mess. The tool can save time, and the mandate can destroy morale. These things coexist.

The layoffs can be both AI-washing and AI-driven.

The time savings are probably directionally correct. AI tools do make certain tasks dramatically faster. The question is whether you use that efficiency gain to build more, to cut, or to restructure. That’s a leadership decision, not a technology decision.

And the incentive structure just shifted, because every board in America watched Block’s stock jump 22% when Jack chose door number two.

What Wall Street’s reaction tells us

Block reported Q4 2025 earnings the same day as the layoff announcement. The numbers were solid: gross profit up 24% year over year, Cash App gross profit up 33%, 2026 guidance of $12.2 billion in gross profit and $3.66 adjusted EPS. The company hit analyst expectations.

But the stock didn’t jump 20% on the earnings. It jumped on the headcount cut.

What Wall Street is pricing here is straightforward: a profitable, growing company just eliminated roughly 40% of its headcount, and the payroll that goes with it, while maintaining revenue guidance. That’s a margin expansion story. Whether AI is “the reason” or “the cover” is irrelevant to the trade. The math works either way.

There’s a third explanation for the layoffs that neither the AI optimists nor the bloat critics are discussing, and it may be the most structural. Anonymous investor @buccocapital laid it out in a longform tweet that’s been viewed over 223,000 times. His argument: calling these “AI-driven layoffs” is air cover for a financial problem that has nothing to do with AI capability.

The core issue is the math of stock-based compensation in a down market. Many software companies staffed up during COVID with equity-heavy compensation packages. Valuations have since reset, with investors now focused on free cash flow minus stock compensation.

For a surprising number of mature software companies, that adjusted number is close to zero. BuccoCapital used Atlassian and HubSpot as examples: 24 and 19 years old, respectively, 14,000 and 9,000 employees, and SBC-adjusted free cash flow that is, in his words, “basically ZERO.”

These companies face a double bind. Technical talent needs to get paid. Stocks are down 60-70% from recent highs. If you keep paying people in stock, dilution continues, and the stock gets punished further. If you switch to all-cash compensation, there’s no free cash flow left.

The only way out is fewer people.

And if you’re going to lay people off, well, AI sounds better than ‘we have financial problems’. Look at how much Block’s stock jumped.
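The double bind is easier to see with numbers. Every figure below is hypothetical and chosen only to illustrate the mechanism. None of it comes from Atlassian, HubSpot, or Block. I am also assuming revenue stays flat and that both cash payroll and stock compensation scale directly with headcount.

```python
def sbc_adjusted_fcf(free_cash_flow: float, stock_comp: float) -> float:
    """Free cash flow minus stock-based compensation."""
    return free_cash_flow - stock_comp


def after_headcount_cut(free_cash_flow: float, stock_comp: float,
                        cash_payroll: float, cut_fraction: float) -> tuple[float, float]:
    """Assumes flat revenue and that cash payroll and SBC both scale with headcount.
    Returns (new free cash flow, new stock comp)."""
    new_fcf = free_cash_flow + cash_payroll * cut_fraction
    new_sbc = stock_comp * (1 - cut_fraction)
    return new_fcf, new_sbc


# Hypothetical company, in $ millions. Not real data.
FCF = 1_200.0
SBC = 1_150.0
CASH_PAYROLL = 2_000.0

before = sbc_adjusted_fcf(FCF, SBC)

# Paying the equity portion in cash instead turns the adjustment into a real
# cash outflow, so reported free cash flow falls to the same small number.
fcf_if_all_cash = FCF - SBC

new_fcf, new_sbc = after_headcount_cut(FCF, SBC, CASH_PAYROLL, cut_fraction=0.20)
after = sbc_adjusted_fcf(new_fcf, new_sbc)

print(f"SBC-adjusted FCF before: {before:,.0f}")
print(f"FCF if all comp were cash: {fcf_if_all_cash:,.0f}")
print(f"SBC-adjusted FCF after a 20% cut: {after:,.0f}")
```

In this made-up case the adjusted number is close to zero whether the company pays in stock or cash, and it only moves meaningfully when headcount does. That is the shape of the argument, whatever the real figures are for any given company.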

The implications and the template

This framing doesn’t require you to believe AI is overhyped. It says: even if AI is doing everything Jack claims, the layoffs were structurally inevitable because the financial model of paying large software workforces with equity in a down market has stopped working. AI provides the narrative that makes the stock go up instead of down when you announce the correction.

That’s exactly what happened at Block. It will be a template for coming layoffs.

Jack himself said it in his shareholder letter: “Within the next year, I believe the majority of companies will reach the same conclusion and make similar structural changes.” Pinterest, CrowdStrike, and Chegg have already attributed layoffs to AI. Shopify CEO Tobi Lutke told employees in 2025 that teams must prove they can’t accomplish a task with AI before requesting additional headcount. Klarna celebrated AI replacing 700 customer service agents.

What got less attention: Klarna later had to rehire workers because the AI capabilities weren't robust enough to handle the workload. CEO Sebastian Siemiatkowski, speaking at a conference two weeks before Block's announcement, described a more measured approach than his earlier headlines suggested. Klarna reduced headcount by about half since 2022, mostly through natural attrition and a hiring freeze, letting the 20% annual turnover rate do the work over time without mass layoffs. The contrast with Block's approach is instructive. Klarna shrank gradually and discovered the limits of AI along the way. Block cut 40% in a day and is betting those limits won't matter.

The mechanism is clear. Block frames its cuts as an AI-native transformation. The stock pops 22%. Every board of directors in tech watches what happens. The playbook writes itself.

There’s a harder edge to this too. Finn Murphy articulated something most tech commentary won’t touch: big tech made a deal with society. Create a class of well-paid knowledge workers, let your founders and shareholders become wealthy beyond imagination, and society mostly leaves you alone. “Google could cut 90% of the headcount and generate 90% of the revenue,” Murphy wrote. “AI may be the excuse but this has always been an option.”

His warning is worth quoting: the displaced workers from this wave will be more politically potent than any previous wave of automation-displaced labor. They’re credentialed, networked, and accustomed to influence. They won’t accept their displacement quietly. How companies handle this transition will shape whether the regulatory response looks like collaboration or punishment.

The macro shadow

Four days before Jack posted his letter, a piece of financial writing went viral that now reads like it was scripted as prologue. “The 2028 Global Intelligence Crisis” by Citrini Research and Alap Shah is a thought experiment written as a fictional macro memo from June 2028, looking back at how AI-driven layoffs cascaded into a systemic economic crisis. It has over 8,000 likes and 1,600 restacks on Substack. Goldman Sachs and Citadel are circulating it internally. Reddit, policymakers, and institutional investors alike have all read it.

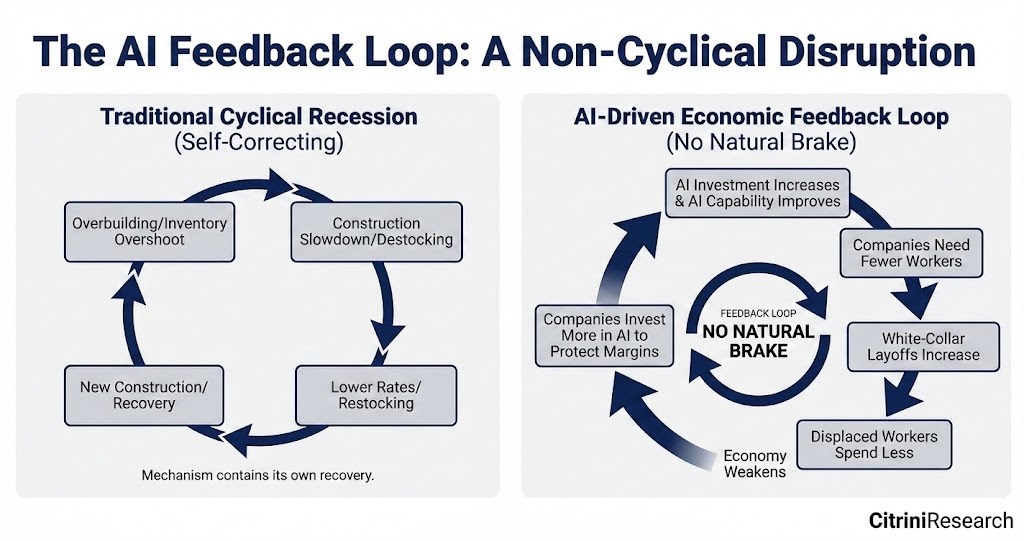

It’s a scenario, and the authors are explicit about that. They disclaim prediction entirely. Yet it’s resonating because it maps a feedback loop that starts with exactly what just happened at Block.

The loop works like this: AI improves, companies cut headcount, savings flow into more AI capability, AI improves further, companies cut more headcount. Displaced workers spend less. Consumer-facing businesses weaken. Those businesses invest more in AI to protect margins. The cycle accelerates. Unlike a typical recession, there’s no natural brake. The cause of the downturn keeps getting better and cheaper.

This cycle operates as OpEx substitution, not CapEx buildout. A company that spent $100 million on employees and $5 million on AI now spends $70 million on employees and $20 million on AI. Total spending is down. AI spending is up. Every company’s AI budget grows while its overall budget shrinks.
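The example budget above works out like this. The dollar figures are the ones from the paragraph; the only thing added is the arithmetic.

```python
# Budget figures from the example above, in $ millions.
before = {"employees": 100.0, "ai": 5.0}
after = {"employees": 70.0, "ai": 20.0}

total_before = sum(before.values())
total_after = sum(after.values())

total_change = (total_after - total_before) / total_before
ai_multiple = after["ai"] / before["ai"]
ai_share_before = before["ai"] / total_before
ai_share_after = after["ai"] / total_after

print(f"Total spend: {total_before:.0f} -> {total_after:.0f} ({total_change:+.1%})")
print(f"AI spend multiple: {ai_multiple:.0f}x")
print(f"AI share of budget: {ai_share_before:.1%} -> {ai_share_after:.1%}")
```

Total spending falls by about 14% while AI spending quadruples, and AI goes from under 5% of the budget to over 22%. That is why AI vendors can report booming revenue while the economy around them is shrinking.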

The Citrini piece specifically opens with early 2026 layoffs at profitable companies doing “exactly what layoffs are supposed to. Margins expanded, earnings beat, stocks rallied.” Block is that scene, playing out just days after the essay went viral. The piece even discusses payments companies facing disruption from AI agents routing transactions through stablecoins instead of intermediaries. Block is a payments company. The layers of irony are hard to ignore.

Even if AI can’t actually replace all the work companies think it can, the belief that it can is already reshaping negotiations, hiring, and market dynamics. The perception drives economic impact regardless of whether the technology fully delivers.

And then there’s a question of price. Will inference costs go down, or will they climb as inference providers need to raise prices to turn a profit? The current AI cost structure may look like the early days of Uber, where rides were subsidized to drive adoption. The economic case for AI replacement depends partly on AI staying cheap, and that’s an assumption, not a certainty.

You don’t need to believe the Citrini scenario plays out to its full extreme. What you need to do is stress-test your own organization against it. Are you thinking about the second-order effects on your customers and market? Because the boards of public companies are.

What this all means

So what are you supposed to do with all of this?

The instinct will be to pick a side. AI changes everything, or AI is overhyped, and this is just cost-cutting. Resist that instinct. The evidence supports an uncomfortable middle: AI is changing the math on team composition, AND companies overhired during ZIRP and are using AI as a convenient cover, AND the stock market just created a powerful incentive for every CEO to follow Jack’s playbook.

All three of those things are operating simultaneously. Here’s what I’d think about.

The question has shifted. It’s no longer “will AI affect my team’s size?” It’s “on what timeline and in what ways?” If you’re an engineering VP or director, the conversation about your team’s structure is coming whether you initiate it or not. The only variable is whether you’re the one framing it or whether it gets framed for you by a CEO who just watched Block’s stock pop 22%. Start modeling what your organization looks like with 20% fewer people and better tools. Start modeling what it looks like with the same people and 2x the output. Have both plans ready, because your leadership team will ask for one of them within the next twelve months.
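If you want a starting point for those two models, here is a back-of-the-envelope sketch. Everything in it is an assumption for illustration: the 100-person team, the idea that output scales linearly with headcount, and the function names. It is a way to frame the conversation, not a forecast.

```python
def required_uplift(headcount_cut):
    """Per-person productivity gain needed to keep total output flat
    after cutting a fraction of the team (0.20 means a 20% cut)."""
    return 1 / (1 - headcount_cut) - 1


def scenario_output(headcount, headcount_cut, per_person_multiplier):
    """Total output in 'person-units', assuming output scales linearly with people."""
    return headcount * (1 - headcount_cut) * per_person_multiplier


team = 100  # hypothetical team size

# Plan A: 20% fewer people. How much better do the tools need to make everyone?
uplift = required_uplift(0.20)
plan_a = scenario_output(team, 0.20, 1 + uplift)

# Plan B: same people, 2x the output per person
plan_b = scenario_output(team, 0.0, 2.0)

print(f"Plan A needs a {uplift:.0%} per-person gain just to hold output flat")
print(f"Plan A output: {plan_a:.0f}, Plan B output: {plan_b:.0f}")
```

The useful number is the first one. A 20% cut requires roughly a 25% gain from everyone who remains before the company has gained anything at all, which is why it helps to know what your tools actually deliver before anyone asks for a plan.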

Deploy before you cut. The Angie Jones interview is a useful playbook here. The companies that invest in making their existing people dramatically more productive with AI tools will outperform those that just cut headcount and hope the remaining people figure it out. Block’s anonymous employees complaining about top-down AI mandates are a warning: adoption that feels like surveillance breeds resentment, not productivity. Angie’s team got adoption by letting people choose their own models, solve their own problems, and build their own tools. The mandate approach, with its weekly emails to the CEO and AI fluency in performance reviews, produced cratering morale and employees quietly heading for the exits. If you’re deploying AI tools to your team (or any tools, for that matter), the method matters as much as the technology.

The most striking part of Jack’s post-layoff interview with Wired wasn’t the defense of the cuts. It was his vision for what comes next. “If I were to build a company today I would have no management hierarchy whatsoever,” he told Steven Levy. “The company itself would be focused on all the artifacts of the work that we’re creating, with an intelligence layer on top that everyone in the company could have a conversation with and query and build intent into.”

“I want the company itself to feel like a mini AGI.”

He described a future where Block’s customers would create their own products and customizations, essentially vibe-coding the company to meet their specific needs.

When Levy suggested this aligned with predictions about half of white-collar work disappearing, Jack hedged on the timeline while doubling down on the direction: “I do believe that people will shift into other types of roles and work. But I can more or less guarantee the role of a company is going to be markedly different.”

When Levy asked whether he was trusting the remaining 6,000 employees to self-organize after losing roughly 40% of their colleagues, Jack said “Absolutely.” He called the shift “existential” and said every company that isn’t building itself as intelligence “is going to face something existential, and it’s going to happen over the next year or two.”

This is either the most clear-eyed strategic vision in tech right now, or it’s a CEO who just cut 4,000 jobs and is rationalizing the decision with increasingly abstract language about the future. The distance between those two readings is where every leader should be spending their time.

Watch Block over the next several months. The signals will be specific. If Block executes well at 6,000, every board in tech will treat this as precedent. If they struggle, it becomes a cautionary tale. I’ll be watching:

Square’s uptime and incident response times. If a 40% headcount cut affects reliability at a payments company, it will show up here first.

Cash App’s app store ratings and customer support response times. These are lagging indicators, but they’re public.

Employee attrition among the remaining 6,000. If Block’s best engineers leave because the workload became unsustainable or the culture never recovered from “crumbling,” the AI-efficiency thesis is damaged.

And product velocity: does Block actually ship new features, or does the smaller team spend all its time maintaining what exists? Jack’s letter promised the remaining team would “do more and do it better.” That’s a testable claim. We’ll see.

The Shopify model deserves serious attention. Tobi Lutke’s directive (prove AI can’t do it before requesting headcount) is a more nuanced version of what Block is doing. It starts with the assumption that every new task should be attempted with AI first, and only when that fails do you add a person.

Over time, that changes team composition gradually rather than through a single traumatic event. It also creates organic learning: every failed attempt teaches the organization where AI actually works and where it doesn’t. If you’re looking for a playbook that doesn’t involve laying off 40% of your company and hoping for the best, this is closer to it.

Whoop offers an even sharper contrast. While Block was cutting 40% of its workforce, Whoop CEO Will Ahmed announced the fitness wearable company would nearly double its 800-person headcount this year.

His commentary on the broader trend was pointed: "There are a lot of companies that are doing layoffs right now and blaming it on AI. But they're actually doing layoffs because the businesses aren't performing particularly well. And it's a convenient excuse." His argument: investing in talent and investing in AI tools are decisions that can be made independently. One doesn't require the other.

Don’t underestimate the social contract. The displaced workers from this wave are credentialed, networked, and accustomed to influence. They’re Stanford-educated directors of product, senior engineers with extensive networks, people who know how to organize, lobby, and write op-eds. Finn Murphy’s framing is vivid, particularly in the context of broad social frustration, the rising prices brought on by illegal tariffs, and ballooning oil prices from war with Iran:

“the Robespierre of today is a forty year old Stanford educated father of 4 who contributed to the Review about to lose his director role in Big Tech.”

He’s describing someone with skills, connections, and motivation. How companies handle this transition will determine whether the regulatory response is collaborative or adversarial. The companies that treat displaced workers with dignity, provide meaningful transition support, and are honest about their reasoning will face a very different political environment than those that frame layoffs as visionary AI strategy while their stock price jumps.

One more thing. If you’re one of the 4,000 people affected by last week’s announcement and you’re reading this: the skills you built at Block are in demand. The fact that a CEO decided your role was redundant to an AI tool does not mean your capabilities are redundant. Companies that are thoughtful about AI deployment need experienced people who understand what it takes to build and operate software at scale. That knowledge doesn’t become less valuable overnight.

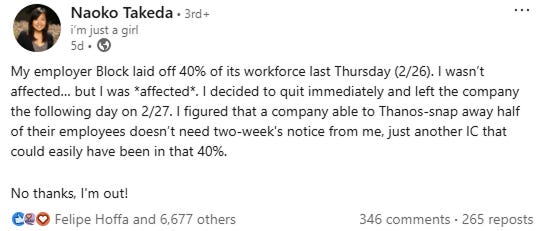

In the days after the announcement, one story captured something that the stock charts and headcount numbers can't. Naoko Takeda, a data scientist on Block's Cash App team, survived the cuts. She was offered a 90% pay raise to stay. She quit within 24 hours. Her LinkedIn post explaining why went viral: she said she would rather see her peers keep their jobs than personally profit from their departure. Whether you agree with her decision or not, Takeda named something that the productivity metrics and margin expansion math tend to obscure.

There are people on both sides of a layoff who are making decisions based on values that don't show up in a gross-profit-per-employee calculation. The 4,000 who were cut are navigating a brutal job market. The 6,000 who remain are navigating a workplace where the culture was already described as "crumbling" before the cut.

Both groups deserve more than a bumper sticker about the future.

If you want to understand what Block’s AI deployment looked like from the inside, the full Angie Jones conversation is worth your time. Watch on YouTube or listen on Apple Podcasts or anywhere else you get your podcasts.

Note: this newsletter used to be called Test Lab. To simplify things, I’ve consolidated it under the Chain of Thought name.