Inverting the Innovator’s Dilemma with Open Source AI Agents

Open source AI is accelerating and incumbents like Salesforce are adapting to stay ahead

The tension between open source and closed software has been around for years. Five years ago, if you had asked Salesforce to open-source its core orchestration language, they’d have looked at you like you were crazy.

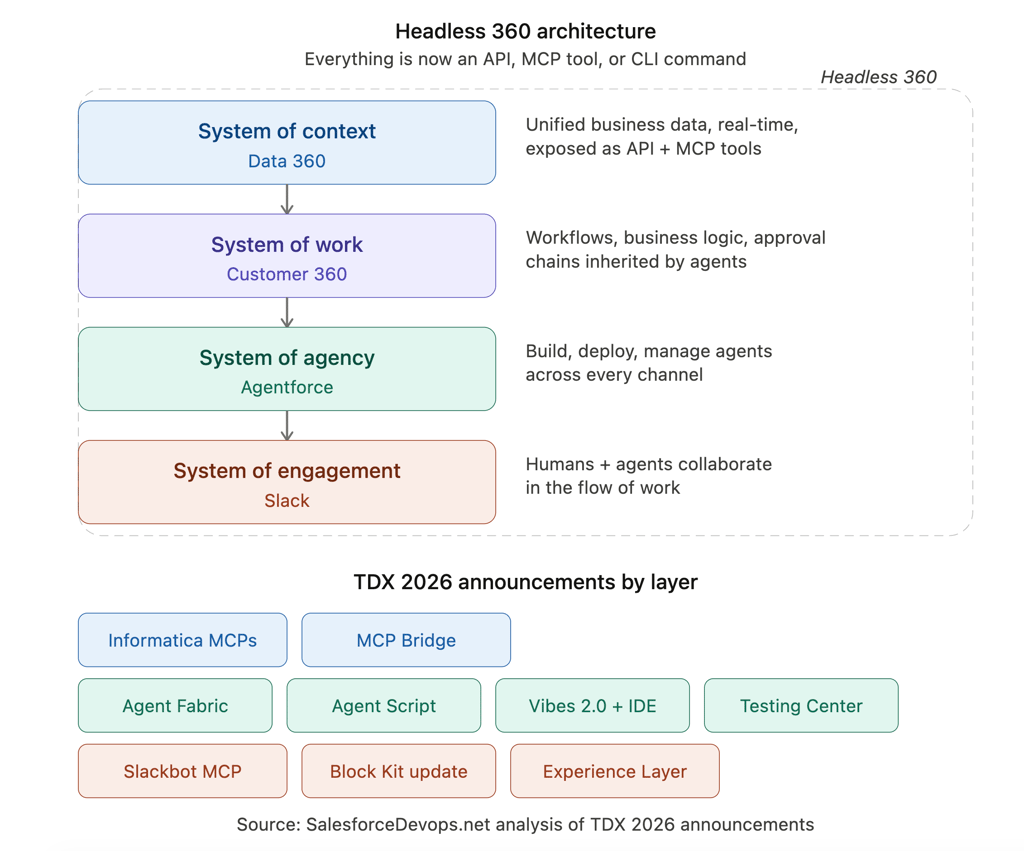

Yet on April 15th at Salesforce TDX, they did just that, publishing Agent Script’s full language spec, grammar, parser, and compiler to GitHub. It wasn’t even the biggest announcement of the week: they also shipped Headless 360, a set of 60+ MCP tools and 30 preconfigured coding skills that let developers call Salesforce from Claude Code, Cursor, Codex, or Windsurf without ever opening a browser.

Amid all the announcements, it’s crucial to understand why one of the most successful enterprise providers in history gave up a closed-source moat it spent years building to embrace a new, open strategy. It’s also important to realize that Salesforce is not the only incumbent leaning into open source, and far from the only company taking an open-first approach.

Why? Software is just tokens now.

Software = tokens

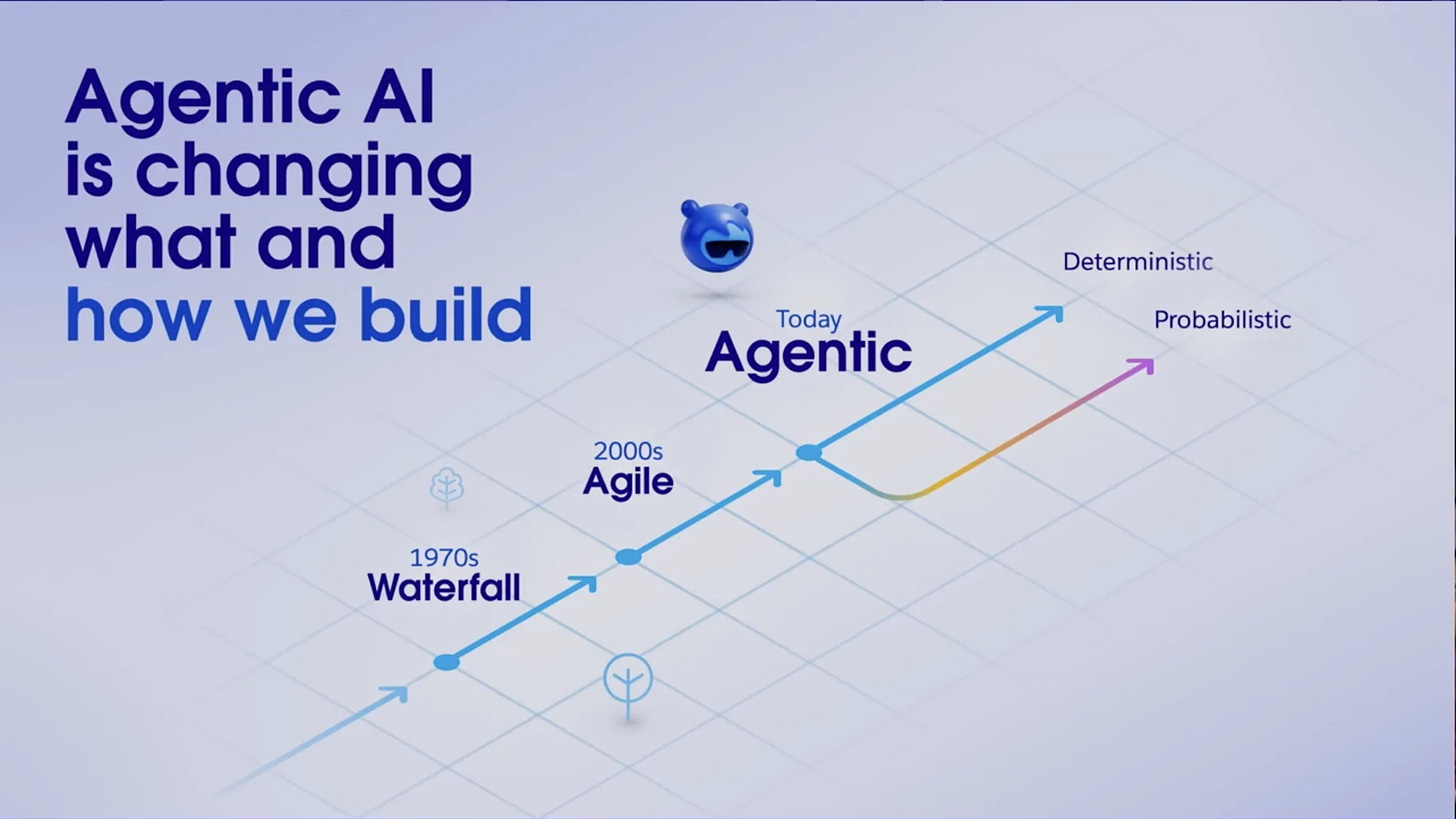

In the last year, the way software is built has radically changed, with no signs of slowing down. Agentic coding has opened up software development to millions more people, and it has pushed top engineers to a throughput we’ve never seen before. Software is now produced in the same currency as everything else AI generates: tokens. The cost curve is, for now, collapsing accordingly.

The TDX keynote addressed this directly: Joe Inzerillo, President of Enterprise and AI Technology at Salesforce, highlighted that the 80-20 rule has flipped. We can get to a ‘working’ product far faster than before.

The problem is that when competitors can rebuild your stack at agent speed, your incumbency stops protecting you and instead puts a clearer target on your back: you’ve already established there is a market that people will pay for. Disruptors have every incentive to try, and the barrier to product creation has collapsed. For that matter, the threat of ‘we’ll just build it internally’ has never been more credible.

Companies are adapting to that future and acknowledging that the basics of agents are easy to spin up. There are a million and one agent frameworks. Every software company is enabling them. There are new MCP tools built for them every day (I’ve even built a few myself), and the explosion of projects like OpenClaw and Hermes is driving a golden age of open source agent harnesses and agent development.

Yet open source has not ‘won’ AI. There is a lot of game left, and frontier model labs are still ahead, even if their leading models now hold that lead for less time. But one of the most closed-source-native incumbents in enterprise software just decided the closed playbook was not going to carry it through the agent era. The fastest-growing software projects in the world are open.

Open source growth stories are so important that companies are buying fake GitHub stars to raise new funding rounds, because VCs often use stars as a proxy for interest. There is statistical evidence of a correlation between GitHub engagement and startup funding outcomes: being active on GitHub is associated with a 15% increase in the likelihood that a startup has raised a financing round.

All of this focus on open source, and the enablement of developers everywhere to ship ever more open source code, is driving incumbent companies to open up their stacks. They have the most to lose if they don’t.

Salesforce’s strategic pivot

I spoke with Nancy Xu, VP Agentforce & AI, and she explained why Salesforce has changed its strategy: Agentforce is now the fastest-growing product at Salesforce. Her bar for enterprise production: a customer-facing agent runs a million conversations a month, and a million out of a million need to follow your business rules. Regulated businesses won’t tolerate reliability failures in production, and they’re embracing AI in a big way. Since I wrote my 2024 essay on the rise of AI agents, we’ve seen exponential growth in the number of agents being deployed, and as Agentforce’s continued growth shows, that trend is accelerating, not slowing down.
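The ‘million out of a million’ bar is stricter than it sounds. A quick back-of-the-envelope sketch in Python, assuming rule-breaking conversations are simply the non-compliant share of a fixed monthly volume (a simplification, since real failures tend to cluster), shows that even 99.9% compliance still leaves roughly 1,000 rule-breaking conversations a month.

```python
def expected_failures(conversations: int, compliance_rate: float) -> float:
    """Expected count of conversations that break a business rule,
    when each conversation complies with probability compliance_rate."""
    return conversations * (1.0 - compliance_rate)


MONTHLY_CONVERSATIONS = 1_000_000  # the example volume from the interview

for rate in (0.99, 0.999, 0.9999, 1.0):
    failures = expected_failures(MONTHLY_CONVERSATIONS, rate)
    print(f"{rate:.2%} compliant -> {failures:,.0f} rule-breaking conversations/month")
```

Each extra "nine" only divides the failure count by ten; getting to zero requires rules that cannot be violated at all, not just a better model.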

Salesforce is embracing a future where software is cheap to build and is being built constantly, backing that bet with Agentforce Vibes 2.0, which enables vibe coding on its infrastructure, and a $50M Builders Fund.

These are all signs of the broader, radical strategic shift Salesforce has made: it has decided it needs to win open source to win the future. And if it succeeds in bringing developers into an open Salesforce ecosystem, its enterprise-ready capabilities, the ones that actually make agentic systems reliable at scale, position it to capitalize.

For more on the shift in how software is being built, the importance of throughput speed as a moat, and leaning into the new software paradigm, I spoke with AMD’s CVP of AI Software, Anush Elangovan, on the podcast this week.

What Salesforce actually shipped

Among the many announcements, three key moves Salesforce made at TDX speak to its embrace of this new approach:

Agent Script on GitHub is a typed, structured language for defining agent behavior: when the agent reasons with an LLM, when it follows deterministic logic, what subagents it can call, what variables it tracks, what guardrails constrain it. Publishing the full spec, grammar, parser, and compiler is not a token gesture. It’s a language-level abstraction, designed for stickiness.

Headless 360 exposes every Salesforce capability as an MCP tool, a CLI command, or an API. Every integration Salesforce has built over twenty-five years is now an addressable tool for somebody else’s agent. The explicit target is the developer who uses Claude Code or Cursor or Codex all day and has never touched a Salesforce admin console. Salesforce is going where the builders already are, not asking them to come to the Trailblazer portal.

Agentforce Vibes 2.0 defaulting to Claude Sonnet 4.5 is a concrete coding-quality bet, and it is a bet on Anthropic. Developers can swap to GPT-5 or Salesforce’s own models, but the default tells you which model Salesforce trusts to write code against its platform. Schema-aware code generation means Vibes knows what OpportunityLineItem means in your specific org, a context advantage generic tools can’t replicate without the data layer Salesforce spent a quarter century building.
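For readers who haven’t looked under the hood of MCP, which is what makes Headless 360’s tools callable from Claude Code or Cursor: a client invokes a server’s tool by sending a JSON-RPC 2.0 request with the `tools/call` method, a tool `name`, and an `arguments` object. The sketch below builds such a request in Python. The tool name `query_records` and its `soql` argument are hypothetical placeholders, not actual Headless 360 tool names; a real client discovers available tools from the server’s `tools/list` response.

```python
import json
from typing import Any


def mcp_tool_call(request_id: int, tool_name: str, arguments: dict[str, Any]) -> str:
    # MCP clients invoke a server tool with a JSON-RPC 2.0 "tools/call" request.
    request = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)


# Hypothetical tool name and argument; not an actual Headless 360 tool.
print(mcp_tool_call(1, "query_records", {"soql": "SELECT Id, Name FROM Opportunity LIMIT 5"}))
```

Because the envelope is the same for every tool, any MCP-speaking agent can reach a Salesforce capability without Salesforce-specific client code, which is the point of going where the builders already are.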

All three moves read as one thesis: open the stack to win developer adoption, monetize the enterprise-grade runtime where production actually lands, and enable Slack agents where teams spend their days. Adoption runs through GitHub. Revenue runs through Agentforce. Slack is further locked in as the surface where work happens. The upgrade path has a clear connector: the same Agent Script you run on your laptop ships to Agentforce’s hosted runtime with audit logs, session tracing, deterministic guardrails, and regulatory controls baked in.

That’s the Kafka (Confluent), Elasticsearch (Elastic), and MongoDB playbook, applied to the agent layer.

This is also a deliberate bet on developers over Salesforce’s traditional Trailblazer audience: the admins and low-code power users who powered the Salesforce ecosystem for the last two decades.
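Agent Script’s core idea, declaring when an agent reasons with an LLM and when it follows deterministic logic, is easier to see in code. The Python below is not Agent Script syntax; it’s a hypothetical sketch of the same pattern, with an invented refund rule (`REFUND_LIMIT`) and a stubbed `llm_reason` function standing in for a model call. The guardrail runs in ordinary code before the model is ever consulted.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical business rule: refunds above this amount must go to a human.
REFUND_LIMIT = 1_000.0


@dataclass
class AgentState:
    # Variables the agent tracks across a conversation (illustrative only).
    requested_refund: float
    log: list[str] = field(default_factory=list)


def llm_reason(prompt: str) -> str:
    # Stub standing in for an LLM call; a real agent would query a model here.
    return f"draft reply for: {prompt}"


def handle_refund(state: AgentState, reason: Callable[[str], str] = llm_reason) -> str:
    # Deterministic step: the guardrail is plain code, so the model cannot override it.
    if state.requested_refund > REFUND_LIMIT:
        state.log.append("escalated: over limit")
        return "ESCALATE_TO_HUMAN"
    # LLM step: reached only after the deterministic check passes.
    state.log.append("approved: within limit")
    return reason(f"approve refund of ${state.requested_refund:,.2f}")


if __name__ == "__main__":
    print(handle_refund(AgentState(requested_refund=2_500.0)))
    print(handle_refund(AgentState(requested_refund=120.0)))
```

Keeping the rule outside the model means it holds no matter what the LLM produces, which is the kind of property a ‘million out of a million’ bar demands; the model only drafts the reply once the deterministic branch allows it.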

Three companies, one inversion: incumbents opening

Salesforce didn’t do this in isolation.

On April 22, I published Chain of Thought Ep 56 with AMD’s Anush Elangovan, and he was blunt: the software moat is dead. When agents rewrite your competitor’s stack faster than network effects can compound, owning the moat matters less than controlling the substrate beneath it. AMD’s answer is open source: ROCm, MI300X, an open-weights posture, all deployed as a wedge against Nvidia’s proprietary CUDA moat. For AMD, staying closed means losing, as they shared with me last year.

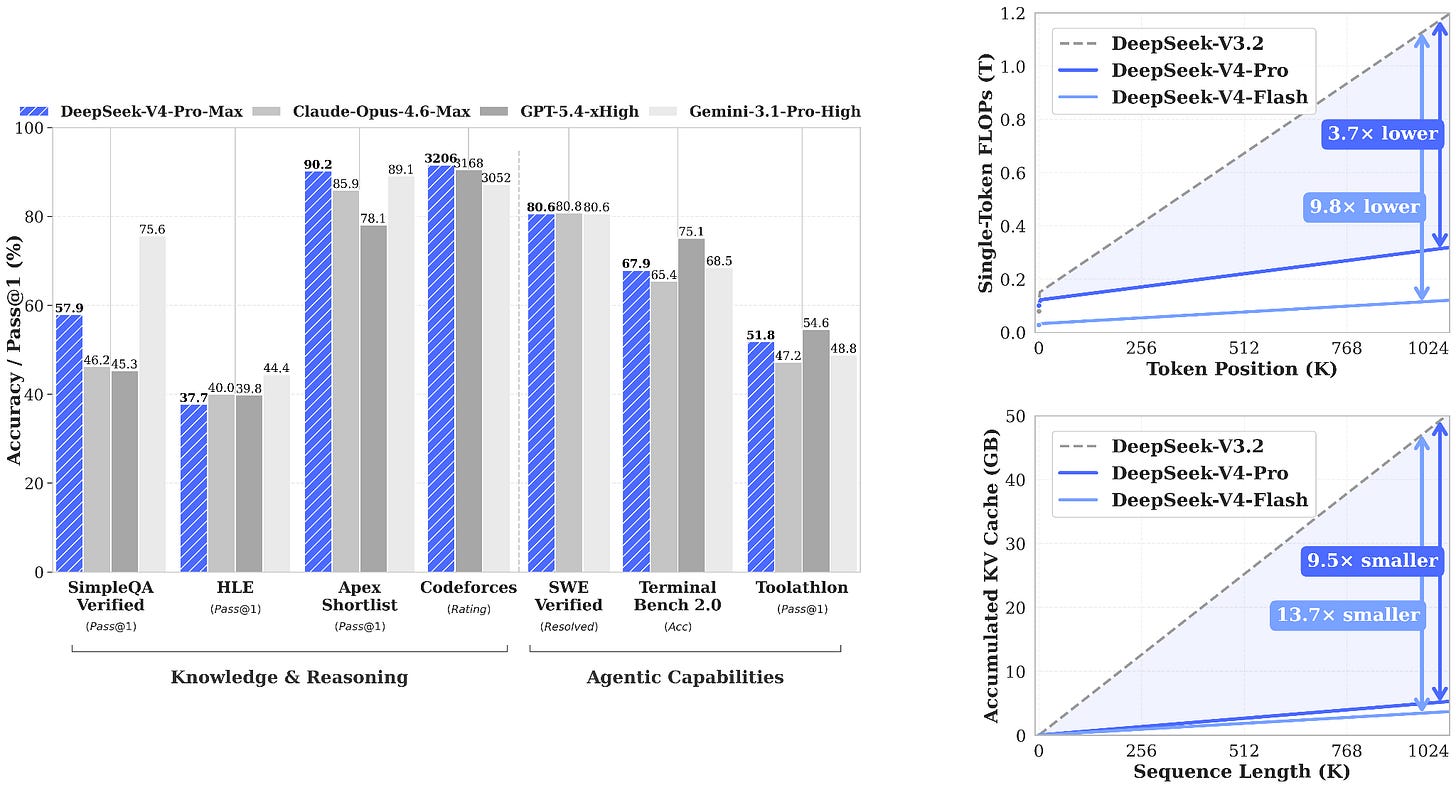

Two days later, DeepSeek shipped V4: 1.6 trillion parameters, an MIT license, a million-token context window, and availability on Hugging Face from day one. The Chinese lab that forced a seismic reckoning over proprietary frontier-model moats a year ago just released a model that outscores most closed-source models on benchmarks, and gave it away. For DeepSeek, staying closed would mean ceding the ecosystem to labs that can outspend them.

Three incumbents at three different layers of the AI stack: hardware, model weights, and enterprise orchestration. There’s a shared pattern across these three distinct companies: open source is winning at layers where the substrate is more valuable than the surface, and the companies who understand their own layer best are the ones opening it up fastest.

This is the Innovator’s Dilemma inversion. Clayton Christensen’s model was that incumbents get disrupted from below by cheaper, simpler entrants who eventually move upmarket. In the agent era, incumbents are preempting that disruption by opening the layer that would otherwise be used against them.

If LangChain, CrewAI, or Mastra become the default agent-orchestration standard, Salesforce risks becoming a stranded proprietary platform nobody wants to integrate with. If AI inference hardware consolidates around Nvidia, AMD is locked out forever. If closed Chinese frontier models stay opaque, Western procurement blocks them on policy grounds before they ever reach deployment.

For all three companies, open source is the least-bad strategic option.

What this means

If you are building an AI-native agent startup, the window just got narrower. Frameworks like LangChain, CrewAI, and Mastra have roughly twelve to eighteen months to become sticky enough in enterprise before incumbent platform stacks like Agentforce’s Agent Script become the default mental model developers learn first. Being the open alternative to the incumbent stops working when the incumbent is the open alternative.

You can see how quickly incumbents have reacted by looking back at agent discussions from just nine months ago. Re-listen to my conversation with CrewAI’s CEO João Moura and ask yourself: what did they get wrong?

Engineers choosing what to learn get an unusually cheap option in Agent Script on GitHub. You can adopt the mental model without signing a Salesforce contract, build against it locally, and still have a clear upgrade path if your employer ever needs a million-out-of-a-million reliability guarantee. Compare that to learning a proprietary agent orchestration language that dies the moment the vendor does. Despite their early strides, I am far from convinced LangChain has a long-term future as an AI heavyweight.

Enterprises evaluating agent platforms get governance-native defaults that didn’t exist in this form a month ago. Session tracing, deterministic guardrails, audit logs, and a surface of 60+ MCP tools, essentially for free, make the “build it myself” calculus expensive fast. Salesforce is smart to lean into pre-provisioned skill packages, observability templates, and reusable React components. It’s a Lego-brick approach the company has long used within its platform for Trailblazers, now extended to developers and vibe coding more broadly.

The companies framing this as only an LLM benchmarking race are missing part of the picture. The next phase of AI development is about whose infrastructure can survive a million conversations without blowing a $500K refund on a chatbot hallucination, whose governance compounds instead of breaking under regulation, and whose developer ecosystem builds faster than any competitor can constrain it.

Open source is winning at those layers. Not the whole stack. Not yet. But enough that one of the most closed-source-native enterprise incumbents on earth just broke its own twenty-five-year playbook, and DeepSeek shipped the week’s second receipt. Anyone still pricing agent infrastructure like it’s 2023 is going to be surprised by what lands on their desk in 2027. Hell, it’s part of why I joined a company focused on open source AI inference at the end of 2025.

Salesforce just gave every enterprise a reason to evaluate agent platforms with them. They also gave every developer a language to learn that makes them Salesforce-native by default. If the bet works, they strengthen their position as the system of record instead of being disrupted.

The next two years will tell us if the innovator’s dilemma has truly been inverted.

Shout out to Luca Rossi of Refactoring and the awesome Salesforce comms team (pictured below 😁) for inviting me down for TDX! I had a blast - and learned a lot.

Make sure you’re subscribed to Chain of Thought to get future trip reports, more analysis, and weekly podcasts with top developers and leaders building our agentic future.